Any number of philosophical disputes arise in the courts, and legal cases make for excellent discussion springboards.

_________________________

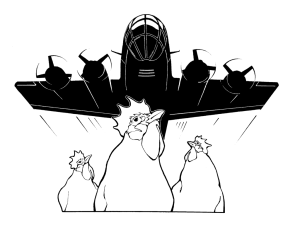

U.S. v. Causby

328 U.S. 256 (1946)

Justice William O. Douglas, who wrote this legal opinion, observed, “It is an ancient doctrine that at common law ownership of the land extended to the periphery of the universe. . . But the doctrine has no place in the modern world. The air is a public highway.”1 Indeed, if everyone’s property rights extended upwards infinitely, commercial aviation would be an impossibility, with lawsuits ensuing from every flight.

In this case, Mr. Causby was a chicken farmer whose property lay near the end of a Greensboro, North Carolina runway, where bombers and other military planes landed day and night, missing his chicken house by only 63 feet. It drove the birds crazy, and over half of them bashed themselves to death against the barn walls in a panic. The Causby family was driven to distraction as well, not only from the collapse of their business, but also from the exhausting noise and, at night, light. And so he sued to protect his to-the-heavens airspace . . . and lost.

Whatever the justice of it all, ancient doctrine citing “the periphery of the universe” raises the interesting metaphysical question of what shape that ownership might take. The full doctrine says property rights extend downward as well, so one can imagine something of an inverted cone or pyramid terminating at the center of the earth, where property lines from China would meet those from America, each coming to a fine point. But what about the other direction with its upward boundaries? Would it rise in the shape of a silo the width of the property on the earth’s surface? And how far would it go? The answers concern the metaphysics of space.

First, does the universe have a periphery? Say we go out a gazillion miles and hit some sort of terminus to what we view as outer space, the darkness from which the nebulae and constellations shine forth in the night sky. Do we hit a wall of sorts? If so, how thick is the wall? Isn’t it spatial as well? And, unless it’s infinitely thick, there’s something on the other side which continues the expanse. So shall we say that Causby’s property is infinitely large?

And what do we make of claims that space is “curved”? We get this sort of statement from those who’ve imbibed Einstein’s relativity theorizing, with its talk of a “space-time continuum.” Textbook illustrations show a range of models, such as a steel ball resting on a flexible rubber sheet (the earth’s gravity bending light, whose speed is “the constant”) or something like a saddle-blanket draped over the back of an invisible horse. If space is really bent, then Causby’s “silo” could be curved rather than straight-sided.

But those illustrations lie in the space of a book’s page, which strikes me as a better representation of space itself, with the curved illustration speaking more to the behavior of bodies within certain regions of space, and with the understanding that the page extends infinitely in not only two but three dimensions. The relativity math can be helpful, even indispensable, in sending out deep-space probes and such, but, in the end, it doesn’t give us the final word on capital-S Space. (And, incidentally, the speed of light, 186,000 miles per second, is not an absolute constant but a contingent one. It could be 220,000 mps, or 27 mps, depending on God’s good pleasure.) Science is good at helping us get around, but it’s not equipped to address ultimate realities.

Metaphysics is devoted to sorting out the reality beyond and behind appearances, and there are plenty of wacky appearances to get behind and beyond, e.g., mirages; the Doppler effect; the sense that you continue to spin after you’ve stopped; déjà vu; the impression that your train is moving when it’s actually the neighboring train. Similarly, the apparent curvature of space can be a useful, albeit puzzling, fiction (or a useful reality if you define ‘space’ a certain way), but it is ultimately nonsensical. We can’t think it, and so we shouldn’t say it, unless we’re doing something specialized, where an as-if claim can help our math.

Immanuel Kant had an interesting take on this. He called space and time “pure forms of intuition,” the theater, if you will, in which phenomena play, subservient to such “categories” as causality and plurality. On this model, space isn’t a thing but rather a perspective from which we see (touch, taste, smell, hear) all physical objects. It’s that by which we experience things and not a thing in itself.

Fortunately, the Supreme Court excused Causby from these ruminations and contestations. Otherwise, the case could have run on through the ages as the parties brought in rival “philosophically expert witnesses” (an oxymoron, I fear) to settle the matter. Meanwhile, Causby might have shifted his complaint from the Greensboro bombers to the Cassini-Huygens Saturn probe, dragging NASA into court.

__________

1 The common law expression being Cujus est solum ejus est usque ad coelum, which Douglas quoted in the decision. He left off et ad inferos, which would make the sentence read, “Whoever owns the soil, it is theirs up to heaven and down to hell.” It was, indeed, a long-standing principle, applied for example in the 1587 British case, Bury v. Pope, where a homeowner protested, unsuccessfully, his neighbor’s decision to build a high structure which blocked out sunlight. The court ruled (citing “Cujus est solum . . .”) that the neighbor had the right to build as high as he wished on his own property.

_________________________

People of the State of California v. Orenthal James Simpson

Los Angeles County Superior Court (1995)

For almost a year, Americans were riveted by the O.J. Simpson case. They’d seen the bloody crime scene photos and the slow-motion “chase” down an LA freeway, with Al Cowlings driving the suspect home in a white Bronco, trailed by a host of cop cars. Taken into custody, the ex-NFL star was charged with the knifing deaths of his ex-wife Nicole and Ronald Goldman, a restaurant employee who was returning her glasses.

Over the months of the trial, and thanks to TV coverage, we became familiar with an intriguing cast of characters, including Kato Kaelin, who lived in an apartment out back; Judge Ito; the prosecutors, Marcia Clark and Chris Darden; the defense attorneys, Johnnie Cochran and Robert Shapiro; and detective Mark Fuhrman. And it seemed that most of the nation was glued to the screen when Simpson’s acquittal was telecast at 10:00 a.m. (PST), October 3, 1995.

Though released that day, Simpson was hit, in 1997, with a successful “wrongful death” civil suit by the Goldman family. He wasn’t in a position to pay the millions in penalties assessed, though he raised some money through auctioning memorabilia. Then, in 2007, he joined in a robbery to reclaim some items he felt were his, and he now serves time in a Nevada jail for that offense.

I think it’s fair to say that most observers think Simpson “got away with murder” in the criminal trial, though Cochran and Shapiro wrote books defending the decision. (Clark and others have written books to the contrary.) The evidence, some of which was suppressed in the initial trial, was damning, including DNA analyses of crime-scene blood and matching shoe prints.

Some say the jury composition was decisive, in that 8 of the 12 members were black, that the defense attorney Johnnie Cochran “played the race card,” discrediting the testimony of Detective Fuhrman (whom he once typified as “a genocidal racist, a perjurer, America’s worst nightmare and the personification of evil”), and that Los Angeles was stewing from the police beating of Rodney King. Indeed, there was a level of telecast celebration in the black community, some even saying that it was high time that a black man likely guilty of black-on-white crime was released after so many whites guilty of violence toward blacks had been exonerated – “pay back,” if you will. (Some also say the fact that there were only two college graduates on the jury reduced the impact of technical DNA testimony.)

Be that as it may, “mistakes were made” in the prosecution itself, most dramatically in allowing Simpson to try on the glove once soaked in blood and repeatedly frozen by its caretakers in the evidence process. With Johnnie Cochran declaring “If it doesn’t fit, you must acquit,” Simpson more or less struggled to put on the glove, the difficulty likely enhanced by the glove’s soaking and drying and perhaps by his failure to take his anti-inflammatory arthritis medicine.

In the end, the jury didn’t take long to decide that the prosecution had failed to make their case for Simpson’s guilt “beyond a reasonable doubt” – less than three hours of deliberation after over eight months of trial. Which raises the question of how much doubt is reasonable.

Seventeenth-century French philosopher René Descartes argued that maximum doubt was reasonable, at least as a beginning point. Having heard all sorts of competing knowledge claims, seen man’s susceptibility to illusion and delusion, and wanting to find a solid foundation on which to build all respectable belief, he doubted everything he could, including God’s existence and the reality of the physical world (as opposed to a dream state). He discovered that the one thing he couldn’t doubt was that there was some doubting going on, so there must be a doubter, namely himself.

That’s not a lot to know, of course. The person who goes around saying that the only thing he really knows is that he exists as a doubter is a useless bore. Fortunately, Descartes thought he’d found a way out of his state of minimal – indeed, scarcely discernible – confidence. He figured that an infinite being had to be the cause/source of his thoughts of infinity (you can’t get a greater from a lesser) and that this infinite being (obviously, God) must be perfect and thus disinclined to let us be systematically fooled by what seems to be a genuine world. So, using his reason, he climbed his way back to the surface where he could work and converse with those who hadn’t gone through his descent to the abyss where everything was up for grabs.

Turns out, the world of philosophy was more impressed with his downward climb into skepticism than with the ladder he used to get back out. And they admired his search for something indubitable. “Reasonable doubt” wasn’t satisfying; they wanted “impossible doubt.” And so Foundationalism was born.

Some would find certainty in sense experience at its most basic level, e.g., “I’m being appeared to redly.” Others were keen on such principles as “Everything has a sufficient reason for its existence and behavior” or “You can’t both have X and not-X.” And they would get busy building on these certainties. But then they would be confronted with fresh onslaughts from the skeptics, wondering, for instance, how you could know you were using the word ‘redly’ the same as you did yesterday, or whether some of these necessities were really just definitional items or policy resolutions and not descriptions of the world.

The bigger question was whether iron-clad certainty was worth pursuing in the first place. Wasn’t it enough that a claim be plausible? Could you really have more than probability? After all, didn’t we work that way all the time, giving guesses our best shot and checking things out in the aftermath to see if our convictions could stand the test of time? Weren’t we really following what philosophers called “Inference to the Best Explanation”? If so, maybe Descartes sent us on a multi-century wild goose chase.

This isn’t to say that doubt is a bad thing in itself — just that you can overdo it, as with the Simpson jury. Indeed, while they said the government lawyers failed to demonstrate Simpson’s guilt “beyond a reasonable doubt,” I’m more inclined to say that their failure to deliver reasonable justice is manifest, “beyond a reasonable doubt.”

Kitzmiller v. Dover Area School District

U.S. District Court for the Middle District of Pennsylvania

400 F. Supp. 2d 707 (2005)

Not thrilled that the state of Pennsylvania required the teaching and testing of students’ grasp of Darwin’s theory of evolution, the Dover, Pennsylvania, school board passed a measure requiring the ninth grade biology teachers to read a statement saying that Intelligent Design was an alternative account of the origin of life, and to let the students know that the ID book, Of Pandas and People, was available to them. With the help of the American Civil Liberties Union and the Americans United for Separation of Church and State, eleven parents, including the eponymous Tammy Kitzmiller, sued for removal of this statement. The Thomas More Law Center took up the cause of the defendants, the school board. The trial featured a range of disputing experts.

In the end, Judge John E. Jones sided with the ACLU, saying that the ID movement was really an agent of fundamentalist creationists, who wanted to use it as a “wedge” to get Bible indoctrination into the public schools. Besides, he argued, it wasn’t science at all, and so it had no place in the science classroom.

He held that true science shunned reference to the supernatural, that it insisted on empirically verifiable or falsifiable statements, and that “methodological naturalism” was the only way to go in speaking of origins. To open the door to ID would be to supplant science with the “establishment of religion” in violation of the First Amendment.

In due course, Judge Jones was honored by the American Humanist Association with their religious liberty award, and by the Geological Society of America, which gave him their presidential medal. Not so impressed were conservative commentators, Bill O’Reilly, Ann Coulter, and Phyllis Schlafly . . . and distinguished philosopher Alvin Plantinga.

Plantinga said that the statement, “God has designed 800-pound rabbits that live in Cleveland” was “clearly testable, clearly falsifiable and indeed clearly false.”(1) So reference to God doesn’t disqualify a statement from empirical validity. Furthermore, statements of particular design are no more immediately confirmable or disposable than the claim that “there is at least one electron.” Rather, both are nested in broader conceptual schemes, which rise or fall as a package of understanding (or misunderstanding). And in this connection, ID is a serious player.

Plantinga went on to argue that the judge had succumbed to (or arrogated to himself the privilege of) arbitrary stipulation:

Suppose I claim all Democrats belong in jail. One might ask: Could I advance the discussion by just defining the word “Democrat” to mean “convicted felon”? If you defined “Republican” to mean “unmitigated scoundrel,” should Republicans everywhere hang their heads in shame? So this definition of “science” the judge appeals to is incorrect as a matter of fact because that is not how the word is ordinarily used. But even if the word “science” were ordinarily used in such a way that its definition included methodological naturalism, that still wouldn’t come close to settling the issue. The question is whether ID is science. That is not a merely verbal question about how a certain word is ordinarily used.

Of course, Jones had all the encouragement he needed to do this from the plaintiffs’ “experts.” Indeed, this is a conceit widely held by materialistic scientists, as we see from this rare, candid statement by Harvard evolutionist Richard Lewontin. The prof let this slip in a New York Review of Books book review back in 1997, and Berkeley law professor Phillip Johnson brought it to light that same year in a First Things article:

We take the side of science in spite of the patent absurdity of some of its constructs, in spite of its failure to fulfill many of its extravagant promises of health and life, in spite of the tolerance of the scientific community for unsubstantiated just-so stories, because we have a prior commitment, a commitment to materialism. It is not that the methods and institutions of science somehow compel us to accept a material explanation of the phenomenal world, but, on the contrary, that we are forced by our a priori adherence to material causes to create an apparatus of investigation and a set of concepts that produce material explanations, no matter how counter-intuitive, no matter how mystifying to the uninitiated. Moreover, that materialism is absolute, for we cannot allow a Divine Foot in the door. The eminent Kant scholar Lewis Beck used to say that anyone who could believe in God could believe in anything. To appeal to an omnipotent deity is to allow that at any moment the regularities of nature may be ruptured, that miracles may happen.(2)

And we couldn’t let that happen, could we?

So the game is rigged, and Judge Jones was in on the scam, perhaps unwittingly, but certainly self-congratulatorily.

To show the arrogance of this understanding of science, I pose a forensic medicine case, one involving a dead jogger in Central Park. Though there are no signs of foul play, and it’s assumed to be an instance of heart failure, one detective has his doubts – and he’s right. An extremely clever assassin had insinuated a tiny, pin-prick, hard-to-detect poison device in the runner’s left shoe, and a microscopic hole under the second toe ultimately reveals the lethal entry point. Though his colleagues laugh at him for his conspiracy theory, he persists, insisting that another party was involved. And in doing so, he doesn’t cease to be scientific.

That’s what the ID people are saying, in effect – that somebody was involved in engineering the circumstances before us. And, by the way, we’ll meet him one day. In fact, his existence makes the most sense of what we see before us, and the agnostic position seems to be the one requiring the most strain to maintain.

Of course, Judge Jones and Kitzmiller and the ACLU will disavow any interest in addressing the existence of God and his sovereignty. They’re just standing up for the integrity of science. Never mind that revolutionary scientists from Newton to Leeuwenhoek to Mendel freely associated their work and findings with divine, “intelligent design.” They’ve moved beyond that to the high country of naturalistic insulation/isolation. (One wonders if they’d say “Shut up” to a brain surgeon who finds no tumor the day after the MRI had announced a golf-ball-sized malignancy, and then exclaims, “It’s a miracle. They said they were praying for one, but I didn’t think it was possible.” Would he have to turn in his scientific credentials on grounds of epistemological treason?)

One last thing: Judge Jones prides himself on uncovering an ID plan to advance biblical creationism by means of a “wedge” strategy. But so what? What business does he have judging the motives of the parties? Why couldn’t he just as well fault the plaintiffs for their ulterior interest in undermining belief in the Creator God? “Oh yes, they may dress up their atheism in fine talk of science and all that, but we know what they’re up to.” How would that fly? Not far. But that’s the sort of irrelevancy he entertains when it cuts the other way. A sorry spectacle.

____________________

(1) http://www.evolutionnews.org/2006/03/philosopher_alvin_plantinga_de002054.html

(2) Phillip E. Johnson, “The Unraveling of Scientific Materialism,” First Things, November 1997.

_______________________________

McGuinn v. Clarke

United States District Court for the Middle District of Florida

Docket # 8:89-CV-DV-00518 (Filed: April 13, 1989; Closed: June 9, 1989)

Beginning with five members in 1964, the rock group, the Byrds, went through a host of permutations over the next 36 years, with 11 different men serving with the band in its first ten years. Only Jim McGuinn was there throughout the changes, and he, himself, evolved spiritually to the point that he changed his name to Roger under the tutelage of a religious advisor of the Subud faith, a form of mysticism founded in Indonesia in the 1920s. Some who left the group (David Crosby in 1967, Gram Parsons in 1968) went on to careers with other bands (in the former case, Crosby, Stills, Nash and Young; in the latter, the Flying Burrito Brothers). But Michael Clarke, who left in 1967, attempted, in the late 1980s, to appropriate the Byrds’ name with his own band, including another original member, Gene Clark. At this provocation, Roger McGuinn joined with Crosby and Chris Hillman, yet another original member, who left in 1968, in suing on grounds of false advertising, unfair competition, and deceptive trade practices.

So, we may ask, in 1989, who were the real Byrds? The Clark/Clarke version had two of the original members. The McGuinn version, only one. And besides, we may ask, was McGuinn the same McGuinn who started with the band, or had his soul transformation made him a different person, meaning that, at a deeper level, there were no founders present in the “McGuinn” band in 1989?

In this vein, philosophers have wrangled for ages over the essential properties of a thing. The Greeks puzzled over the case of a ship which had been restored one plank at a time. Aristotle said it was the same if the “formal cause,” the design, was identical. But what of two exact replicas? A simpler case involves the notion of “grandfather’s ax,” which, through the years, has had the head and handle replaced at different times. Is physical continuity the key? A big arena for this discussion concerns the nature of personal identity – what makes you you? Classical and medieval philosophers spoke of each man’s having a soul (the latter informed by biblical attestations), but the 18th century Scottish philosopher David Hume said he couldn’t discover his own soul through introspection. As he wrote in A Treatise of Human Nature:

“For my part, when I enter most intimately into what I call myself, I always stumble on some particular perception or other, of heat or cold, light or shade, love or hatred, pain or pleasure. I never can catch myself at any time without a perception, and never can observe anything but the perception.”

So he gave us a “bundle theory” of the soul, a rope, if you will, of experiences with no single fiber or strand running the length of the line. This stood in opposition to the conviction that the soul was like a wire, with an unbroken thread of metal running through it all.

Of course, this raised the question of why these were my experiences and not somebody else’s, and the major Humean critic, the German Immanuel Kant, answered by postulating what he called the “transcendental unity of apperception,” the necessary ground for the ownership and organization of experiences. The soul’s not a thing seen, but rather the means by which our seeing is possible or orderly.

Earlier, in Britain, 17th century philosopher John Locke said that memory was the principle of ownership, so to speak. Remembrance ties things together for the individual. Even though I’m only currently aware of my present headache, the headache I remember having a week ago is mine by virtue of its presence on my “hard drive” of consciousness. So we’re not created anew in each instant. We have a story, which makes each chapter an instance of us.

Of course, the question naturally arises, “How about amnesia?” If the person can’t tie his current existence to the past, how can he be the same person? And what of his eternal destiny if he dies before sorting out his faith, having forgotten that he made a commitment to Christ in his “former life”? The same goes for a person who’s fallen into radical dementia. In what sense is he still a disciple? (A Christian would trust that God does the sorting and saving, and he keeps track of his own, even when they can’t keep track of themselves.)

It works the other direction, too. When a person is saved, he becomes, according to the Bible, a “new creation.” He is “born again.” How, then, is he the same person? Well, certainly, we say, colloquially, that “he’s a completely different man,” but we also say, colloquially, that “he used to be wild,” implying that it was the same person back then, but with a different character.

I think it’s reasonable to say that Bob is still Bob, but with a different character and temperament. The “entirely new person” talk is hyperbole, but with a good point.

Materialists, of course, tie personal identity to the physical body, but a thought experiment can challenge that conceit. Playing off illustrations in the literature, I ask my seminary students to imagine that a classmate slumps over dead, but that in the midst of our fruitless efforts at resuscitation, one of us gets a call from someone claiming to be that very person who died before our eyes. He expresses his astonishment that he’s standing in front of Buckingham Palace in a red uniform and bearskin hat, but he insists that he’d just arrived in that body and that he was in class only a few minutes earlier.

Puzzled in extremis, we gather our wits as best we can and start asking questions: “Who was sitting on the back row and asked about whether we should double-space or single-space the term paper?” “Bob Falkenberry.” “Okay, who was sick today and had to miss class?” “Rupert Davis.” “Try this: Dr. Coppenger told a joke about what?” “The Jeff Foxworthy one where he says that you may be a redneck if the last words of a close relative were ‘Hey, y’all, watch this.’” “Well, yes.”

And so on it goes, checking out at every turn. Would it be so crazy to say the palace guard was the same person, yet in a different body? And might this not also be the case if all the memory checks turned out positive, but now the fellow cursed like a sailor and said he had no use for Christ? Couldn’t we say he’s changed bodies and had a transformation in spirit and conviction, but that he’s still our acquaintance?

This sort of thing keeps philosophers busy. But it also should intrigue the man on the street, or in the pew. What is your essence, both as a human and an individual? And how much of what you currently are – body, conscience, convictions, dispositions, memories, associations, appearance – could drop away without your ceasing to be the same person?

________________________

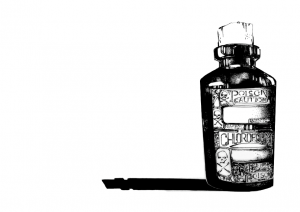

Repouille v. U.S.

165 F.2d 152 (1947)

In October of 1939, Louis Loftus Repouille chloroformed his 13-year-old son to death. Four years and eleven months later, he applied for U.S. citizenship and ran afoul of the Nationality Act’s rule that those seeking naturalization must exhibit “good moral character” for the five years preceding the application. If he had waited another month or so, he wouldn’t have had the same problem. Nevertheless, the federal district judge said Repouille was close enough to pass, but the U.S. Attorney in New York objected, and the case moved up to the Circuit Court of Appeals, where the judges said they didn’t have the data to make the proper call. They sent it back to the lower court with instructions that it do its homework and try again. The assumption was that Repouille would again be approved since another three years had passed, and the five-year rule was well-satisfied.

But what sort of homework did they have in mind? Well, they were appealing to a standard applied in an earlier, Massachusetts case, one which tied judgment to the question of whether “the moral feelings, now prevalent generally in this country” would “be outraged” by the conduct in question: that is, whether it conformed to “the generally accepted moral conventions current at the time.”

Judge Learned Hand, who wrote the opinion, left little doubt as to his own lack of “outrage” as he described the situation:

[Repouille’s] reason for this tragic deed was that the child had “suffered from birth from a brain injury which destined him to be an idiot and a physical monstrosity malformed in all four limbs. The child was blind, mute, and deformed. He had to be fed; the movements of his bladder and bowels were involuntary, and his entire life was spent in a small crib.” Repouille had four other children at the time towards whom he has always been a dutiful and responsible parent; it may be assumed that his act was to help him in their nurture, which was being compromised by the burden imposed upon him in the care of the fifth. The family was altogether dependent upon his industry for its support.

Furthermore, he noted the lack of enthusiasm for harsh judgment among the original jurors:

Although it was inescapably murder in the first degree, not only did they bring in a verdict that was flatly in the face of the facts and utterly absurd – for manslaughter in the second degree presupposes that the killing has not been deliberate – but they coupled even that with a recommendation which showed that in substance they wished to exculpate the offender.

Instead of faulting the jurors, he compared them to other righteous, civilly disobedient figures, such as the Abolitionists. And he underscored his own sympathy for Repouille by declaring,

[W]e all know that there are great numbers of people of the most unimpeachable virtue, who think it morally justifiable to put an end to a life so inexorably destined to be a burden to others, and so far as any possible interest of its own is concerned condemned to a brutish existence, lower indeed than all but the lowest forms of sentient life.

He wondered out loud how one might take a proper reading on the national conscience, even mentioning the possibility of a Gallup poll.

One must ask whether Judge Hand had a firm grasp of the Bill of Rights, which was meant as a sea wall against the tides of public opinion, for there are any number of occasions when the public would not be “outraged” over discrimination toward those with strange religious beliefs (e.g., Santeria), repellent opinions (Holocaust denial), absurd associations (Flat Earth Society), or a different racial mix. In my own lifetime, it was illegal in some states for a black and a white to marry, and there was a day in America when the institution of slavery was perfectly acceptable to many. It’s hard to see where things would stop if the standard were based on “the moral feelings, now prevalent generally in this country.” Lynching enjoyed wide favor in some regions, and one has only to look around the world to see what damage the tyranny of the majority can do when the majority has lost (or never managed to secure) its moral bearings. The public conscience is fluid while the moral verities are fixed, and by tying the work of the court to the former rather than the latter, Hand did the law a disservice.

Be that as it may, this case puts focus on a concept philosophers have studied for millennia, the notion of “good moral character.” It’s returned to recent prominence through the discussion of virtue, thanks in large measure to the work of Alasdair MacIntyre (his landmark book After Virtue, appearing in 1981). In the previous decades, ethical discussion was devoted to cases and issues, e.g., whether the Vietnam War was immoral, capital punishment unjust, abortion murder, or capitalism cruel. Focus was on the act, but then philosophers began to talk about the actor.

In doing so, they often returned to Aristotle’s Nicomachean Ethics, wherein this ancient Greek philosopher located the virtuous “Golden Mean” between two unsavory extremes. For instance, courage was a good thing, but too much of it – recklessness – wasn’t; nor was too little of it – cowardice. The same went for a sense of humor, which found its proper place between the extremes of silliness and coldness. And one can run down the list of good personal qualities – whether patience, industriousness, kindness – easily identifying those who overdo or underdo things. Of course, the Golden Mean doesn’t apply to manifest evils, such as adultery, where it makes no sense to talk of too much or too little of it.

A strong feature of Aristotle’s theory is that virtue is a habit you can cultivate, and that the easier it comes to you, the more virtuous you are. Thus, one may think that the person who fights against gluttony every step of the way is more heroic than the one who has no appetite for it (pun intended), but the one who’s developed a spirit of moderation warrants the greater admiration. It’s become ingrained and so, in this respect, the diner is virtuous.

The virtuous person is not the one who sings, “I gotta be me,” or insists on being “authentic,” when what he is authentically is inappropriate. Rather, he should be singing, “I gotta grow up.”

On this model, a single sin in one’s past does not render the actor a perennially bad person. The Apostle Paul turned out well, despite his earlier role in the stoning of Stephen. Just as “one swallow does not a summer make,” “one buzzard does not a winter make.” So the 5-year track record enshrined in law made sense, even if Justice Hand’s take on the gravity of the murder in question was skewed.

It would be interesting to see whom the “good moral character” clause could disqualify today. Supposedly, it would rule out practicing felons, but how and when might they go deeper to search out possession of the virtues Aristotle and many others have identified? Might the US Citizenship and Immigration Services say, “I’m sorry, Mr. Jones, but we can’t accept gluttons with a bad temper. After all, we have the ‘good character’ standard.” Not likely.

__________________________

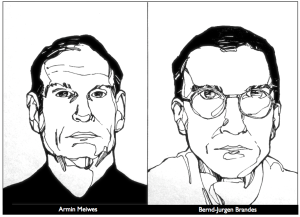

The Case of Armin Meiwes

German Constitutional Court, Karlsruhe (2006)

Two German men, Armin Meiwes, a computer specialist, and Bernd-Jürgen Brandes, a computer engineer, met in March of 2001 for the purpose of cannibalism. Both men frequented a web site devoted to those keen on the notion of humans’ consuming humans, a site on which Meiwes posted an appeal for a willing victim. Brandes volunteered, and the subsequent home video of the event showed his assent to both mutilation and homicide. Both men attempted to consume a portion of Brandes’s flesh, and after Brandes had bled to death, Meiwes cut him up for cold storage. Over the next several months, Meiwes ate 44 pounds of this human meat.

The next year, Meiwes was again on the Internet hunt for a volunteer, and his appeal referenced the Brandes deed. When their deadly transaction and encounter came to light, Meiwes was arrested and convicted of manslaughter in a Frankfurt district court and sentenced to eight years in prison. But there was widespread dissatisfaction with this decision, and the German supreme court conducted a retrial, where Meiwes (nicknamed the “Rotenburg Cannibal”) was given life in prison.

This seems a fitting resolution to the affair, given the heinous nature of the crime, but those of the libertarian perspective are not so sure the court got it right. Indeed, they’re reluctant to applaud the lower court’s assessment of a lesser sentence, or of any sentence at all, for the act in question was performed by “consenting adults,” and thus was a “victimless crime.”

John Stuart Mill’s work, On Liberty, serves as the classic rationale for this perspective. He divides human acts into two groups – those affecting oneself and those impacting others. He argues that we should grant people the freedom to do the former, while reserving the right to intervene when they do the latter. By this standard, self-harm is none of our business; harm to others most certainly is.

For example, if I wish to shadow box in my back yard, I’m free to do so, but not in a rush hour subway car, where my punches would land on fellow commuters. And while I should be free to report that there’s a fire raging in faraway California, I must not cry “Fire” in a crowded theater, a declaration which could cause a deadly stampede. Free speech is essential, but not absolute in Mill’s system.

Translated to the current political arena, libertarians object to laws requiring motorcyclists to wear helmets and to authorities who arrest heroin dealers. If people want to ruin themselves, that’s their business.

Of course, matters are often not so clear cut. The rider who dashes his head against a curb or the addict who sleeps away his days in the shadows of needle park become our problem in many ways, costing society in terms of lost productivity, heartbroken/broken families, medical and disability expenses, etc. Indeed, no man is an island.

And even if he stays functional, the damaging ripples from his choices are all too familiar. For instance, while one man keeps his sports wagering to a manageable minimum, the institution of gambling on games sets players and coaches up for compromise, whether on account of greed for bribes or fear from threats.

But, of course, there are rocks on both sides. If we set out to keep the culture clean from bad ideas and treat its citizens as children needing the firm hand of the nanny state, then we forfeit the genius of Western society and move toward the totalitarian agendas of Islam and Communism. Rather, we have chosen the free exchange of ideas (including those of Islam, Scientology, Atheism, and Satanism) as well as protections for unpopular, ridiculous, and even repellant behaviors (including gorging oneself to the point of gross obesity, tattooing smiley faces on one’s forehead, or bequeathing one’s entire estate to establish a cat hotel).

It’s Mill’s conviction that, as crazy as things might get, the clash of rival notions is more productive of cultural well-being and progress than the way of suppression. And the ubiquitous backwardness of nations that fear and despise freedom is testimony to the value of his thesis. Furthermore, there are biblical supports for liberty, including Paul’s statement in 2 Corinthians 5:11 that we “seek to persuade men” (and not coerce them).

So back to Messrs. Meiwes and Brandes. These men were fools in the full biblical sense of mental and moral degeneracy. But they didn’t force other Germans to take part in their revolting enterprise. Why not just leave them alone to “work out their damnation” while Christians set about to “work out their salvation,” a la Philippians 2:12?

Surely, though, as Theodore Dalrymple has written, those who prescribe freedom for Armin Meiwes reduce hard-core libertarianism to absurdity. And, strangely enough, Mill himself has provided a qualifier, which, however unwittingly, underwrites the cannibal’s sentence. For in the first chapter of On Liberty, he writes that his framework doesn’t apply to children or to “those backward states of society in which the race itself may be considered as in its nonage.” For “despotism is a legitimate mode of government in dealing with barbarians.” Indeed, “liberty, as a principle, has no application to any state of things anterior to the time when mankind have become capable of being improved by free and equal discussion.”

He surely had in mind illiterate and savage natives in far off climes – suitable subjects for British colonial paternalism – when he wrote this in Victorian days, but Meiwes and Brandes have demonstrated that you don’t have to leave Western Europe to find headhunters and cannibals. We appear to be coming full cycle, from civilization back to barbarism, and Mill’s dream of the nation as a lively debating society must have limits when the participants descend so far into sociopathy that they’ve shown themselves rationally and ethically incapable of handling liberty.

So yes, the burden of proof should be upon the one who would infringe upon the liberties of others, but it is not an insurmountable burden when the harm to others and, indeed, to the most basic standards of humanity is egregious.

Worcester v. State of Georgia

31 U.S. 515 (1832)

In the early 1800s, Samuel Worcester, a native of Vermont, was serving as a missionary to the Cherokees on their tribal lands in today’s northern Georgia. He was an agent of the American Board of Commissioners for Foreign Missions, which had grown out of a “Haystack Prayer Meeting” involving five Williams College students in 1806. Soon after graduation, they organized to send missionaries to India, most notably Adoniram and Ann Judson and Luther Rice, who subsequently became Baptists, as was William Carey who had come to India from England. (Their denominational shift led to the establishment of the first American Baptist missionary society, which became the Triennial Convention, from which the Southern Baptist Convention emerged in 1845 on the eve of the Civil War.)

Worcester ran afoul of Georgia authorities operating under a new state law forbidding white men to live among the Cherokees without special license from the state. He and his missionary colleagues were convicted of illegal residence and sentenced to four years of hard labor. They appealed this ruling, and the U.S. Supreme Court took up the case, with Chief Justice John Marshall writing the majority opinion. He declared that the United States was the sole authority for legal dealings regarding the Cherokees, that the Cherokees were a nation under U.S. protectorate, and that the Indians had the right to determine who could and who could not live in their midst. The opinion rehearsed the history of colonial settlement, including the settlers’ dealings with the Indians, and sketched protocols for interaction, including regard for their rights to property and political sovereignty, which, in the Court’s judgment, had been violated by the state of Georgia. Worcester was set free.

This makes for a fascinating demonstration case in the philosophy of law. According to Marshall, Georgia had failed to make valid law, in that they’d worked at cross-purposes with the U.S. Constitution. But what made the U.S. Constitution valid? Was it because of its moral superiority? Well, certainly, Marshall spoke to ethical matters, recounting the national practice of land purchase and treaty, not brute seizure and subjugation. But there are all sorts of wonderful policies we might imagine which do not constitute law. Just because it’s a good idea, it doesn’t follow that the citizens must fall in line with it dutifully. Lincoln’s 1863 Emancipation Proclamation was a long time coming, and slavery was legal for ages. Indeed, law can be unsavory yet authoritative, as was shown to be the case in 1857, when the Dred Scott decision upheld the Fugitive Slave Law and disallowed the Underground Railroad, which was spiriting slaves to freedom in the north.

There are those in the natural law tradition who say that immoral law simply fails to be law, but most legal philosophers are inclined to say that immoral laws are nevertheless laws, though they should be changed and may well warrant civil disobedience. On this model – legal positivism – the content of law is determined by the authorities, whether kings, legislatures, or other magistrates. (A variant of this holds that it is not so much what’s on the books but what the officials will actually enforce, e.g., the speed limit is more nearly 75 than the published 70; it’s called “legal realism,” but it too depends upon the actions of officials, not the ethical winsomeness of their choices.)

This all raises the question of what, then, makes one a magistrate, able to make law. Is it moral grandeur, high IQ, or genealogy? No, for we can think of any number of ethically challenged, sub-genius, non-aristocratic men who became rulers. So what makes them the ones in charge?

As harsh as it may sound, the answer is power, and not just a measure of power. The power must be coercive, able to dominate. In the case at hand, it derived from the fact that the United States had military and police forces superior to those of Georgia (a fact borne out by subsequent battles in the Civil War, compliments of General William Tecumseh Sherman). That’s what made the Worcester decision stand.

But that’s not the end of the story. Though Marshall had declared the Cherokee territory a nation, he was powerless to override the executive power of President Andrew Jackson, an old Indian fighter and hero of the Battle of New Orleans in the War of 1812 with the British. “Old Hickory” pushed through a policy of Cherokee removal, forcing them to take a “Trail of Tears” to new land in Oklahoma. He had the “coercive sanctions” necessary to back up this displacement of a “nation” from the east to the west side of the Mississippi.

As wrong as this may be, it shows that while “might does not make right,” might does make property. Homeowners today in Dahlonega, Georgia, deep in the heart of the former Cherokee nation, do not need to surrender their lands to Oklahoma Indians seeking reinstatement of their property rights. These contemporary residents bought their houses and lots fair and square, as did the folks they bought them from. And so on back through the decades. So what was the base point for “fair and square”? The U.S. policy which put Worcester’s former mission field on the market for whites to purchase.

Of course, it’s not clear whether the Cherokees themselves acquired their north Georgia land in the purest of fashions. There may have been other native peoples residing there who themselves were pushed out or even killed by the Cherokees. It makes for interesting historical research, but it doesn’t change the fact that those now in charge say the land no longer belongs to these pre-Cherokees either.

Of course, this cuts both ways. If the U.S. decided to reverse the Trail of Tears and reestablish the Cherokee nation and they had the power to enforce it, that too would be the law. That’s what law does, for good or ill — hence the need to be thoughtful and decent in the framing of law going forward, whatever the past may be.

As a side note, it’s interesting to consider what sort of chaos we’d see in the Middle East if we tried to redistribute the land on the basis of original possession, ameliorating all wrongs committed in subsequent conquests. It certainly wouldn’t go to the Palestinians, whose ancestors gained advantage and possession by Muslim Arab conquest. And the Jews would have to give way to the Jebusites or other Canaanite inhabitants, from whom they seized territory. The only reasonable approach is to say the land belongs to whomever it belongs to today by authority of the government. (Just ask the disgruntled, relocated homeowner who had to sell his land under the power of “eminent domain” to make way for an interstate loop.)

__________________________

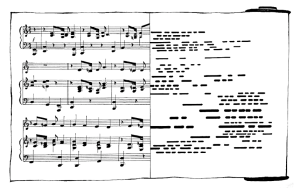

White-Smith Music Publishing Company v. Apollo Company

209 U.S. 1 (1908)

In the opening decade of the 20th century, the White-Smith company published sheet music for two compositions by Adam Geibel, Little Cotton Dolly and Kentucky Babe. The company producing a player piano called the Apollo made piano rolls of the songs. White-Smith sued for copyright infringement – and lost. The court said that, under current law, a copy was a tangible object that could be mistaken for the original, and that a white paper roll with holes in it could not be confused with a white paper sheet with ink marks on it. (The carefully spaced holes in the piano roll allowed jets of air to pass through, thus triggering the mechanism to sound particular notes.)

Attorneys for White-Smith had objected that the intention of copyright law was “to protect the intellectual conception which has resulted in the compilation of notes which, when properly played, produce the melody which is the real invention of the composer.” The court insisted, though, that such a “musical creation which first exists in the mind of the composer” is “not susceptible of being copied until it has been put in a form which others can see and read.” And in this case, what they could see and read was notes on a page. Indeed, it would be a very rare bird who could sight-read a piano roll.

Of course, copyright law has evolved and has had interesting tests in recent days over the property status of lines of computer code, but our concern here is with the metaphysics of the discussion. (Of course, matters of “intellectual property” bring questions of justice into play, but we’ll focus here on philosophical questions of reality.)

For one thing, what’s the status of an “intellectual conception?” Does it exist when no one has it in mind? If so, where? Take, for instance, the opening notes of Beethoven’s 5th – Duh, Duh, Duh, Dum; Duh, Duh, Duh, Dum. What if no one is performing or humming it, if all the sheet music is destroyed along with all the recordings, and if no one is even thinking it? Does it no longer exist? Or is it just out there, waiting to be recalled?

Furthermore, we may ask whether Beethoven created the tone sequence or rather discovered or came upon it. After all, if God knows all possibilities, physical and mental, and he knows the future, then this musical “ring tone” must have been in his mind long before Beethoven came on the scene. And, on this model, it would continue to exist if all earthly memory, reproductions, etc. were obliterated – exist, that is, as a concept without physical instantiation.

All sorts of theologians and philosophers have joined in the discussion (not of Beethoven’s 5th in particular, but of the “ontological” [science of being] issues). Plato is a prominent case in point. He taught that there were non-material “Forms” (e.g., justice; friendship) which existed eternally and which we knew in our pre-earth life. His teacher Socrates claimed to be a midwife who delivered his students’ idea babies, the healthy ones originating in their earlier, clear-headed days. He worked by questioning – the Socratic method – with the conviction they already knew the important things and that they could remember them if they’d only follow his lead in chipping away the stupidity that had bedeviled them in their present, troublesome bodies.

A lot of Christians, including St. Augustine, have found much to like in Plato, though not his teachings about pre-existence. They’re pleased to locate the ideals of courage, knowledge, etc. in God’s mind, secure there when cowardice and ignorance are rampant on earth. But this raises the question of whether these concepts are co-eternal with the Trinity, along with mathematical verities such as 2+2=4. That can sound impious, even heretical, as though there were other everlasting entities in the universe, even if they were only intellectual.

Some insist that those who wrangle over such abstractions are wasting their time. Some would ask, “What difference does it make if Ludwig hatched it or grasped it?” Some would say, “There’s no way to settle this, so let’s just quit talking about it; endless, irremediable disputation is toxic.” Yet, that puts us onto another metaphysical question, or perhaps we should say a meta-meta-physical question, concerning whether this creature called man is constitutionally able to wrestle mentally with the ultimates.

_________________________

Owen v. Crumbaugh

81 NE (Ill.) 1044 (1907)

The nephews and nieces of affluent Illinois banker and landowner James T. Crumbaugh were indignant that the family had been slighted in his will. Crumbaugh’s wife and only child had died, and the estate would normally have flowed to his siblings and their offspring, but he went in a different direction, endowing the construction and sustenance of a Spiritualist church and a public library. The relatives argued that the will was based on a falsehood, namely Crumbaugh’s declaration that he was in his “right mind and natural mind” when he wrote it. They claimed that anybody who believed in communicating with the dead through séances was mentally unfit to bequeath anything and that distribution of his wealth should be determined by the courts, which gave preference to close kin.

Deciding in Crumbaugh’s favor, the Supreme Court of Illinois rehearsed his life and faith, drawing on the testimony of dozens of witnesses in the trial court, and reviewed the law of competency, including one of their own precedent decisions. They concluded that allegiance to a curious religious sect was not enough to declare one delusional.

Actually, Crumbaugh himself once thought the Spiritualist faith ridiculous, but he came around at his wife’s urging and in response to some dramatic experiences of his own. Most striking was what he took to be communion with his son, who died in infancy, but who now lived maturely as “Bright Eyes” in the “spirit land.” He was convinced that Bright Eyes had intervened to rescue him on several occasions, wherein he narrowly escaped damage from a flying object, a fire, and a fall.

Though the Spiritualists trafficked in alleged “clairaudience,” that is “clear hearing, rapping, moving of tables or moving of furniture, trumpet séance, materialization, writing, spirit healing . . . and spirit photography,” the court said they were in no position to draw a firm line between acceptable and unacceptable belief and practice, whether Baptist or Swedenborgian, whether treasure hunting with a small metal ball suspended on a thread or water searching with a forked wooden stick. When such luminaries as Sir William Blackstone, Sir Francis Bacon, John Wesley, Martin Luther, and Cotton Mather believed in witches, how could the court disallow a will on grounds that the subject had strong convictions regarding the supernatural?

Instead, they appealed to standards hammered out in previous cases, decisions that spoke of insanity in terms of a conception arising “spontaneously in the mind, without evidence of any kind to support it,” of “something extravagant” which “has no existence whatever but in his heated imagination,” and of a person “incapable of being permanently reasoned out of that conception.” Besides, Crumbaugh was a model citizen and excellent businessman, and critics would need to prove his madness was isolated, a conceit the courts didn’t buy.

Philosophers have a similar challenge – determining what counts as rational – not in isolated cases, but as an everyday affair. They don’t appeal, as philosophers, to scripture to make their case. Neither can they settle matters by pointing to test results in the manner of metallurgists, electrical engineers, or geneticists. Rather, they work “in sales” at a level of abstraction that depends upon the audience’s hunger for coherence, consistency, palatability, splendor, wholesomeness, simplicity, fellow-feeling, and other such desirables, which may or may not be in play in the culture. Their stock in trade is the reductio ad absurdum (“reduction to absurdity”), which presses proffered principles and proposals to the breaking point, if such can be found. Socrates was a master at this, as have been good philosophers through the ages. For instance, when one says there’s no right and wrong, that morals are relative, then a critic might say, “Well, then, I suppose it’s okay to murder you, right?”

Most would balk at agreeing to this, and would back up to abandon or qualify their initial claim. But there’s the rub. Some won’t. They’ll double down on what strikes most to be dumb, and you can’t break the impasse by quoting the Sixth Commandment or detailing the physiology of death. Rather, you leave it to whatever audience there might be to roll their eyes and discount his value as a thinker. Maybe you’ll say something gracious like, “Well, we’ll just have to agree to disagree,” but you’re thinking, “This fellow’s a Crumbaugh before whom I shall not cast pearls.” More likely, you’ll back up and try another line of argument, trying to undermine the fellow’s conviction with an analogy, a piece of definitional surgery, or another implication.

Fact of the matter is, these impasses are everywhere in philosophy, and so philosophers break down into schools of thought, wherein they can find aid, fellowship, and comfort. It’s the sort of thing you find among doctors, where allopathics are not much impressed with homeopathics, and chiropractors and acupuncturists live in separate rooms of their own, each gainsaying the rationality of the other. But philosophy departments typically have a mix of each – analytical, continental, eastern, existential, etc. – with more or less grudging courtesies extended as they patronize the others’ “wacky” enthusiasm for Anselm, Leibniz, Hegel, Berkeley, Wittgenstein, Kripke, Quine, or Zizek.

So why bother with philosophy at all, given the lack of common, much less universal, standards of rationality? Because the issues are too important to ignore, and because God gave us reason, of which we are stewards, even if others don’t appreciate our stewardship. Still, it can be a sweet thing to find accord with those who share your sense of what is absurd or what is plausible in the realm of big ideas. And, along the way, you may discover that you’ve been entertaining an absurdity unaware, and your former detractors can prove to be your deliverers, at least at one point or another.

____________________________

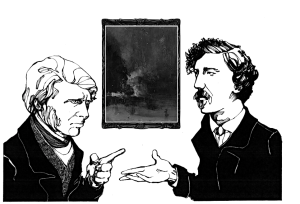

Whistler v. Ruskin

Court of Exchequer, Division at Westminster (Nov. 25-26, 1878)

In 1875, the American painter James Abbott McNeill Whistler (as in “Whistler’s Mother”) exhibited, in a London gallery, a painting he called Nocturne in Black and Gold: The Falling Rocket. It was a dark, impressionistic depiction of a fireworks show, with vague human figures in the foreground. John Ruskin, who held a chair in fine arts at Oxford and was the nation’s most respected critic, lambasted the gallery owner, the painter, and the painting, speaking of “ill-educated conceit,” “willful imposture,” “Cockney impudence,” and a “coxcomb (pretentious fop).” He expressed dismay at hearing they were asking “two hundred guineas for flinging a pot of paint in the public’s face.”

Whistler sued him for libel and won, but only a farthing (the smallest of coins). The loss drove Ruskin into depression, and the expensive victory, which didn’t cover court costs, drove Whistler to flee English hostility for a more hospitable and pecuniarily viable Venice.

The painting incensed Ruskin since it violated what he and most understood to be the proper standard of art, that its subject be both recognizable and edifying, and that its craftsmanship be unmistakable. Whistler had the temerity to “water down” his oils, deliver a “slapdash” image, and flirt with abstraction. It just didn’t qualify as “serious art.” It disposed the viewer toward a “retarding and vulgarizing” form of “self-satisfaction,” (to use Matthew Arnold’s earlier, popular expression), the sort of thing that coarsened and even destroyed culture.

While the Brancusi case (noted elsewhere on this site) addressed the definitional boundaries of art itself, the Whistler-Ruskin case dealt with the question of what made art good or bad – aesthetics. And, as in the days of that trial, the disputes can be vicious. Witness, for instance, the mix of cruel and ecstatic commentary on fashion choices for the Oscars carpet. It’s not enough to say you prefer x over y; rather, you get TV time for declaring y to be “vomitous” and x “a stroke of genius.”

Among the dismissive expressions is “kitsch,” variously defined as “tasteless,” “pathetically sentimental,” or “tawdry.” It’s a good way to write off what you don’t like, and what you don’t think others should like, e.g., the Precious Moments Chapel in Carthage, Missouri, or a Thomas Kinkade painting. But why should critics care if we drive miles out of our way to see the former and pay hundreds or thousands of dollars to acquire the latter? What’s really at stake? And isn’t beauty simply in the eye of the beholder?

Indeed, the word, ‘beauty’ (as in ‘truth, goodness, and beauty’) is central to the dispute. Are there universal standards of taste (as there are objective standards of verity and morality) to which we should hold ourselves accountable? Or is this simply a matter of harmless subjectivity, where you like Rocky Road, and I like Pralines and Cream, and it’s no big deal either way?

Well, even if you think there are universal standards, you might not center your aesthetic on beauty. I prefer to speak of ‘fascination,’ for there are some magnificently ugly paintings (e.g., the Ivan Albright painting of Ida or of the shirtless old man in a bowler) that warrant and reward our attention and respect. And those who say that truth, goodness, and beauty are cut from the same cloth miss the point that something can be bogus and decadent while aesthetically engaging. Aesthetic goodness does not mean moral goodness. Indeed, the Bible warns against being deceived by appearances. (And, of course, it can work the other way, with a wonderful, true message presented in a clumsy or boring way.)

Of course, ‘beauty’ is a broad term, grander than ‘pretty,’ and like ‘good,’ we could treat it as the most general term of aesthetic approbation, much as we treat ‘good’ as the most general term of all approbation, whether regarding a good mother, a good hammer, or a good portrait. Terms of beauty can apply to an “elegant” mathematical theorem or “lovely” act of sacrificial kindness. But again, a beauty-centered aesthetic can drive one to reflexively favor a Van Huysum still life with flowers and a Bierstadt Western landscape over Gericault’s The Raft of the Medusa and Munch’s The Scream.

The literature on aesthetic standards is rich and contentious, from Joshua Reynolds’ insistence on a “pleasing effect on the mind” to Roger Fry’s “significant form,” from David Hume’s inter-subjectivity to Leo Tolstoy’s communitarian ideal. Some critics major on line, mass, rhythm, light and shade, space, color, etc. Others favor novelty or social impact. Some love narrative; others hate it. Some look for signs of seasoned and fastidious virtuosity; others couldn’t care less, even celebrating the swirls and streaks of an ape’s or pig’s paintbrush. Is it process or product or impact or context? And so it goes.

No, aesthetic matters aren’t everything, but they’re not nothing either. They bear thought, investigation, and articulation, so that we might know how to talk helpfully when the zoning board is considering, for our neighborhood, a house that looks like a shoe; when the church building committee is proposing a paisley carpet for the auditorium aisles; when the new music minister favors polka for Sunday worship; and when the senior adult potluck includes a durian dish.

_________________________

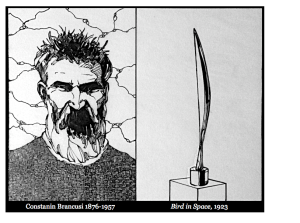

Brancusi v. United States

54 Treas. Dec. 428 (Cust. Ct., 1928)

In the 1920s, the Paris-based Romanian sculptor Constantin Brancusi sent a piece of sculpture named Bird in Space to America for display. He expected it to enter tariff-free as a work of art, but customs officials said it didn’t qualify. Rather, they classified it as a metal tool, along with kitchen and hospital supplies. The problem was the government’s working definition of sculpture – “reproductions by carving or casting, imitations of natural objects, chiefly the human form.” It didn’t look like a bird, so they assessed him a 40% import charge, in effect saying, “That isn’t art!” Drawing on the testimony of expert witnesses from the art world, the judge ruled that the state’s definition was out of date, and that it needed to be adjusted to accommodate abstract art, whether or not people liked it. *

The issue fell nicely into the ancient philosophical “What is it?” form, brought to high art itself by Socrates and his student Plato. While the first philosophers (the “pre-Socratics”) focused on big-box cosmology (suggesting that the universe was essentially water, the Boundless, flux, etc.), Socrates brought discourse down to the concerns of human life in a series of dialogues, e.g., knowledge (Theaetetus); justice (Republic); courage (Laches); friendship (Lysis); love (Symposium). (Plato wrote them up, and a lot of scholarship has gone into saying how much was real Socrates and how much was Plato putting words in his mentor’s mouth.)

So sweeping and exemplary was this collection of exchanges that the 20th-century philosopher Alfred North Whitehead said, in Process and Reality, that the “European philosophical tradition” was best typified as “a series of footnotes to Plato.” Basically, Socrates would prompt an interlocutor (victim) to offer a definition of a great theme, such as piety/righteousness in Euthyphro. Sure enough, the student would give it a shot, e.g., “What the gods endorse.” Then Socrates would raise a troublesome question – “But what if they disagree among themselves?” – and they would be off to the dialogical races. We’ve been doing the same thing for millennia as we’ve grappled with the same issues and others.

Many have asked, “So why should we bother if famous minds haven’t settled things?” And the traditional and correct answer is that you’ll unavoidably be using an implicit definition of one sort or another, and it’s not a bad idea to get clear on what exactly it is, and then think it over. It has practical implications, as Brancusi discovered.

Turns out, Socrates and Plato’s view of art was pretty close to that of the U.S. authorities. They spoke of it in terms of mimesis, “imitation,” and, therefore, didn’t much appreciate it. Believing in the existence of fixed ideals, they counted earthly horses, beds, and such as more or less faithful copies of the transcendent models. Now, if someone ventured to draw a horse or bed, he’d be making a copy of what was itself a copy, resulting in something third-rate. (Think of the degradation of quality when you keep copying successively older copies on a Xerox machine.)

Of course, this definition, mimesis, works better for sculpture and painting than for music and architecture, but it hasn’t aged well as a definition for any of them. Indeed, through the centuries, a range of definitions have vied for respect, e.g., expression; “significant form.” But artists, being a creative and often transgressive breed, have continually pushed the boundaries.

One famous/infamous example was a porcelain urinal appropriated from a discard pile by Marcel Duchamp. He wrote “R. Mutt” on it and entered it in a competition. They didn’t buy it, but the world has done so since then, featuring this “ready made” in many art history books. And that’s just the start. Now we’re asked to accept a pile of Jolly Rancher candies dumped into a corner along with the invitation to take one, unwrap and eat it, in memory of someone who died from AIDS. Or to cooperate with handwritten instructions to imagine the sands of an hourglass completing their fall in a minute or two; so you stand there letting the conceptual sand fall in your mind. (I draw these examples from visits to the generally admirable Art Institute of Chicago.)

To accommodate one curiosity/perversity/ingenuity after another, some have abandoned the Socratic/Platonic project of discerning the essence of the thing. They say it’s a fool’s game, for there’s no property in the thing itself that serves to qualify it. So, under the influence of Ludwig Wittgenstein, channeled by Morris Weitz, they’ve moved to a sociological definition, calling art an “open concept,” whose ever-changing boundaries are determined by the “Artworld” – the “club” of artists, professors, curators, critics, and publishers who make the calls.

I still think the old project is worth the trouble, and, at this point, here’s my working definition: Art is that aspect of personal activity undertaken to charm the beholder, whether or not it transmits truth or prompts goodness. That takes a lot of explaining, but for starters, it excludes colorful rust patterns and spider webs, for neither oxidizing iron molecules nor spiders are persons. (The only sense in which they are art is as works of the Supreme Person, God.) And it leaves open the question of whether the art is well done, decent, edifying, etc.

Yes, it can make conceptual room for the Jolly Ranchers and imaginary hourglasses, but it doesn’t have to praise them. And it doesn’t have to wait for the elites to acknowledge an item or practice in their journals, galleries, and syllabi in order to be certified. There can be art in the staging of tractor pulls as well as in the composition of symphonies. But more on that later.

___________________

* See MaryKate Cleary, “‘But Is It Art?’ Constantin Brancusi vs. the United States,” Inside/Out, Museum of Modern Art (July 24, 2014). Accessed July 9, 2015, at http://www.moma.org/explore/inside_out/2014/07/24/but-is-it-art-constantin-brancusi-vs-the-united-states.